In the last couple of years, iOS marketing has been turned on its head. As a result, many marketers are still struggling to measure the performance of their iOS ad campaigns. If you find yourself in this camp, rest assured that you’re not alone: I’ve talked to some of the world’s biggest brands that still struggle with SKAN performance measurement. The goal of this article is to help you choose the best strategy for setting your conversion values.

Author’s note: Before reading on, I recommend going through previous posts about SKAN to understand what conversion values are and how to make the most of the expanded conversion value options in SKAN 4.0.

Why conversion values are important

As with many things, in mobile marketing you get what you pay for. If you only want to maximize app installs, cheap installs are easy to find, but they are likely cheap because they do little to drive value for your business. For example, one marketer found inexpensive installs that turned out to come from a VPN app, with the installers located in a different country than the one targeted. Many marketers have made the same mistake, and SKAN, unfortunately, increases the risk of falling into this trap.

Before SKAN and ATT, user-level data allowed you to track a single user from an ad click all the way to an in-app purchase. Now, with SKAN, conversion values are the only way to measure post-install performance for users who don’t opt in to tracking through the ATT prompt.

If you set conversion values that reflect user performance, you’ll be able to send your ad platforms (networks) signals that carry important context beyond the install. These signals help ad platforms fulfill their purpose more effectively: finding valuable app users.

The work you put into setting SKAN conversion values won’t, and shouldn’t, apply only to iOS marketing. Establishing meaningful post-install measurements will also give you better signals for Android campaigns and even mobile web sign-up campaigns. Properly configured conversion values help drive KPIs beyond installs, including engagement, retention, and, eventually, revenue.

Investing in effective conversion value measurement also unlocks an opportunity to build additional layers of fraud protection. It’s comparatively easy for fraudsters to mimic an install; it’s much more difficult for them to mimic a purchase. By directing your budget towards users who purchase, you’ll naturally lean away from fraudulent traffic.

For networks, the earlier the postback, the better

Entire books have been written about campaign measurement, but don’t let the complexity deter you.

The gold-standard metric for marketing is Return on Advertising Spend (ROAS), which measures the revenue earned for each marketing dollar spent. Measuring it isn’t always feasible, since development and data engineering resources may be scarce, but don’t ignore performance just because perfect measurement isn’t available. Consider a more basic approach in the interim; a simple proxy of “good” versus “bad” app users is better than nothing. As your measurement models mature, you can fold in richer signals and more sophistication.
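One way to start with a simple “good” versus “bad” proxy and fold in more signals later is to treat each signal as a yes/no milestone and pack the milestones into the bits of a SKAN fine-grained conversion value, which is a 6-bit integer from 0 to 63. The sketch below is illustrative only: bit-packing is one common schema layout, not a Branch or Apple requirement, and the milestone names are hypothetical placeholders.

```python
# Hypothetical milestone names; replace them with events that matter for your app.
MILESTONES = [
    "is_good_user",      # bit 0: the simple "good vs bad" proxy
    "completed_signup",  # bit 1: a signal folded in later
    "started_trial",     # bit 2: another later addition
]

MAX_FINE_VALUE = 63  # SKAN fine-grained conversion values are 6 bits: 0..63


def encode_conversion_value(flags: dict[str, bool]) -> int:
    """Pack yes/no milestones into a single fine-grained conversion value."""
    if len(MILESTONES) > 6:
        raise ValueError("a 6-bit conversion value holds at most six flags")
    value = 0
    for bit, name in enumerate(MILESTONES):
        if flags.get(name, False):
            value |= 1 << bit  # set the bit reserved for this milestone
    return value


def decode_conversion_value(value: int) -> dict[str, bool]:
    """Recover the milestone flags from a postback's conversion value."""
    if not 0 <= value <= MAX_FINE_VALUE:
        raise ValueError(f"conversion value out of range: {value}")
    return {name: bool((value >> bit) & 1) for bit, name in enumerate(MILESTONES)}


print(encode_conversion_value({"is_good_user": True, "started_trial": True}))  # 5
```

Starting with only `is_good_user` yields values 0 and 1. Because each new milestone is appended as a higher bit, values sent under the earlier, smaller schema still decode the same way.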

Given SKAN 4.0’s multiple later-stage conversion values, it’s tempting to use later-occurring events to measure true user value more accurately. However, it’s generally better to get an earlier, broader indication of performance than a smaller number of more accurate values later on. There are two reasons for this.

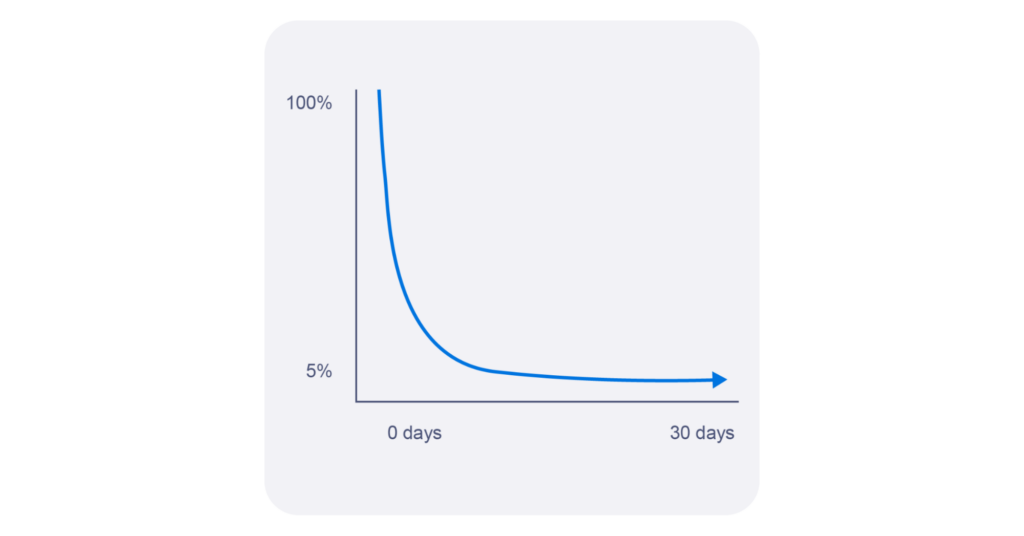

First, in mobile apps, retention decay sharply reduces signal density: the share of users still active to generate a signal. In the example below, 100% of users can contribute a day-0 signal, while only 5% may still be around to contribute one on day 30. That’s a 95% drop in signal density.

Source: Branch
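The signal-density arithmetic can be sketched as follows. The day-0 (100%) and day-30 (5%) retention figures match the example above; the day-1 and day-7 figures and the install volume are made up for illustration.

```python
# Share of installers still active on each day. Days 0 and 30 follow the
# example above; days 1 and 7 are hypothetical.
RETENTION = {0: 1.00, 1: 0.40, 7: 0.15, 30: 0.05}


def signal_density_drop(retention: dict[int, float], day: int) -> float:
    """Share of the day-0 signal lost by `day`, since churned users generate no further events."""
    return 1 - retention[day] / retention[0]


installs = 10_000  # hypothetical install volume
for day, share in RETENTION.items():
    print(f"day {day:>2}: {round(installs * share):>6} users can signal, "
          f"{signal_density_drop(RETENTION, day):.0%} drop vs. day 0")
```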

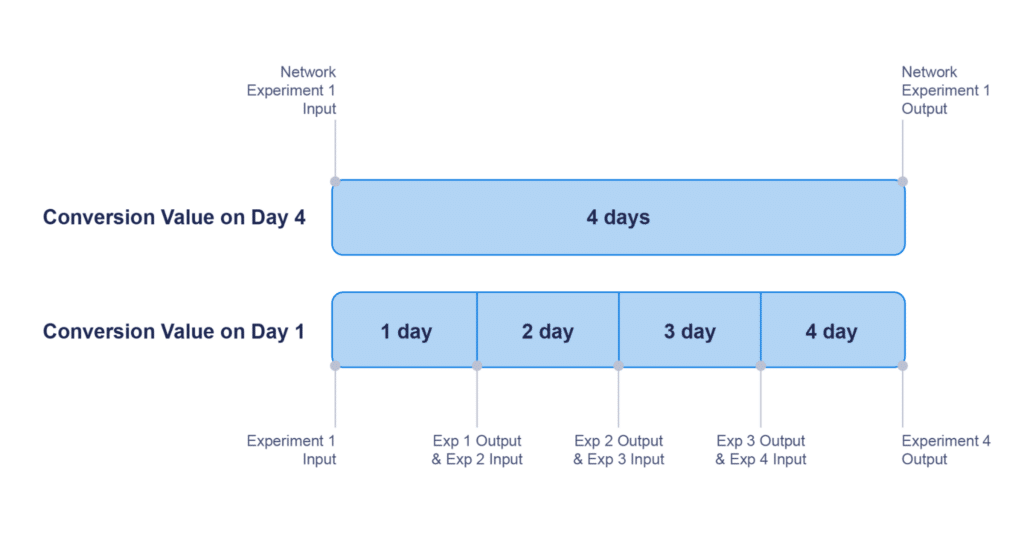

Second, earlier signals give networks a faster turnaround for testing and optimizing campaigns. Remember: you’re paying ad networks to find users, so they’re competing for your ad dollars. The platforms that find the most valuable users will earn more of your budget, so ad networks are incentivized to experiment with new, better targeting methods. If your postback is sent on day four, networks get only a quarter as many test cycles (75% fewer) as they would with a postback sent on day one.

Source: Branch
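The arithmetic behind comparing a day-one postback with a day-four postback can be sketched as below. It assumes, as a simplification, that each optimization cycle must wait for its postback before the next test begins; the 28-day horizon is arbitrary.

```python
def experiment_cycles(horizon_days: int, postback_delay_days: int) -> int:
    """Complete test-and-learn cycles in a horizon, assuming each cycle must
    wait for its postback before the next test can start (a simplification)."""
    if postback_delay_days <= 0:
        raise ValueError("postback delay must be at least one day")
    return horizon_days // postback_delay_days


HORIZON = 28  # hypothetical four-week optimization horizon
fast = experiment_cycles(HORIZON, postback_delay_days=1)
slow = experiment_cycles(HORIZON, postback_delay_days=4)
print(fast, slow, f"{1 - slow / fast:.0%} fewer cycles")  # 28 7 75% fewer cycles
```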

In previous SKAN versions, Facebook mandated a maximum 24-hour window for conversion value postbacks. At the time of publication, ad platforms had not yet issued guidance for SKAN 4.0, but rest assured that earlier conversion values will serve your networks more effectively.
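For context on why earlier values carry more information in SKAN 4.0: as we understand Apple’s documentation, SKAN 4.0 defines three postback windows of roughly days 0 to 2, 3 to 7, and 8 to 35 after install. Only the first postback can carry a fine-grained value (0 to 63, subject to Apple’s crowd-anonymity thresholds); the second and third carry only a coarse value of low, medium, or high. The sketch below maps an event’s day since install to its window using those assumed boundaries; check the current SKAdNetwork documentation before relying on them.

```python
from typing import Optional

# Assumed SKAN 4.0 postback windows as (first_day, last_day) after install.
# Verify against Apple's SKAdNetwork documentation.
POSTBACK_WINDOWS = {
    1: (0, 2),
    2: (3, 7),
    3: (8, 35),
}


def postback_window(day_since_install: int) -> Optional[int]:
    """Return the postback window an event falls into, or None if outside all windows."""
    for window, (first, last) in POSTBACK_WINDOWS.items():
        if first <= day_since_install <= last:
            return window
    return None


def value_granularity(window: int) -> str:
    """Only the first postback can carry a fine-grained (0-63) value."""
    if window not in POSTBACK_WINDOWS:
        raise ValueError(f"unknown postback window: {window}")
    return "fine or coarse" if window == 1 else "coarse only"


for day in (0, 5, 30):
    window = postback_window(day)
    print(day, window, value_granularity(window))
```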

Use events to predict future value

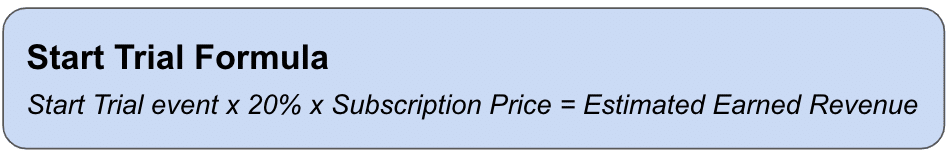

Our advice: don’t shy away from predicting a user’s value from in-app behavior. Some firms have entire data science teams focused on predictions, but that’s not always necessary. Revenue-related events like “Start Trial” and “Add to Cart” make ideal predictors of high-value, high-intent app users, and you can use them to approximate the resulting revenue with reasonable confidence.

For example, if 20% of trials historically convert to a subscription, the “Start Trial” event can serve as a proxy for earned revenue. In the Branch dashboard, multiply the count of “Start Trial” events by 20% and by the subscription price to get a reasonable estimate of the revenue your campaigns earned.

This also simplifies measuring SKAN campaign performance: divide that estimated revenue by campaign spend to get an estimated ROAS.
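The revenue proxy and the ROAS it feeds fit in a few lines. This is a sketch with made-up inputs (500 trial starts, a $10 subscription price, $800 of spend); the 20% trial-to-paid rate is the example rate from the text, and the function names are hypothetical, not part of the Branch dashboard.

```python
def estimated_revenue(trial_starts: int, trial_to_paid_rate: float, price: float) -> float:
    """Proxy revenue: Start Trial count x historical trial conversion rate x price."""
    return trial_starts * trial_to_paid_rate * price


def estimated_roas(revenue: float, spend: float) -> float:
    """Return on ad spend: (estimated) revenue earned per dollar spent."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return revenue / spend


# Hypothetical campaign: 500 trial starts, 20% historical conversion, $10 plan, $800 spend.
revenue = estimated_revenue(500, 0.20, 10.00)
print(revenue, estimated_roas(revenue, spend=800.00))  # 1000.0 1.25
```

A ROAS above 1.0 on this proxy suggests the campaign is earning back its spend, to the extent that the historical trial conversion rate still holds for the new cohort.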

As your data modeling matures, you can chain early-funnel events together into customer pathways that predict LTV more accurately.
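A minimal sketch of pathway-based LTV, assuming you can export, for past users, the ordered early-funnel events each one fired and their revenue over some later period: group users by pathway and average their revenue. The event names, the data, and the use of 30-day revenue as the LTV stand-in are all hypothetical.

```python
from collections import defaultdict

# Hypothetical history: ordered early-funnel events per user, plus 30-day revenue.
HISTORY = [
    (("open", "signup", "start_trial"), 12.0),
    (("open", "signup", "start_trial"), 0.0),
    (("open", "signup"), 0.0),
    (("open", "signup"), 4.0),
    (("open",), 0.0),
]


def pathway_ltv(history: list[tuple[tuple[str, ...], float]]) -> dict[tuple[str, ...], float]:
    """Average observed revenue for each early-funnel event pathway."""
    totals: dict[tuple[str, ...], float] = defaultdict(float)
    counts: dict[tuple[str, ...], int] = defaultdict(int)
    for pathway, revenue in history:
        totals[pathway] += revenue
        counts[pathway] += 1
    return {pathway: totals[pathway] / counts[pathway] for pathway in totals}


ltv = pathway_ltv(HISTORY)
print(ltv[("open", "signup", "start_trial")])  # 6.0
```

Pathways observed for only a handful of users give noisy averages, so in practice you would want a minimum cohort size before trusting a pathway’s estimate.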

In the absence of dollar value, look to customer engagement

For many brands, it’s not easy to map events to earned revenue with a formula like this. The conversion event may happen offline or on the web, or the conversion amount may vary too much. It could also be that too few users engage, leaving signal density too low for campaign measurement.

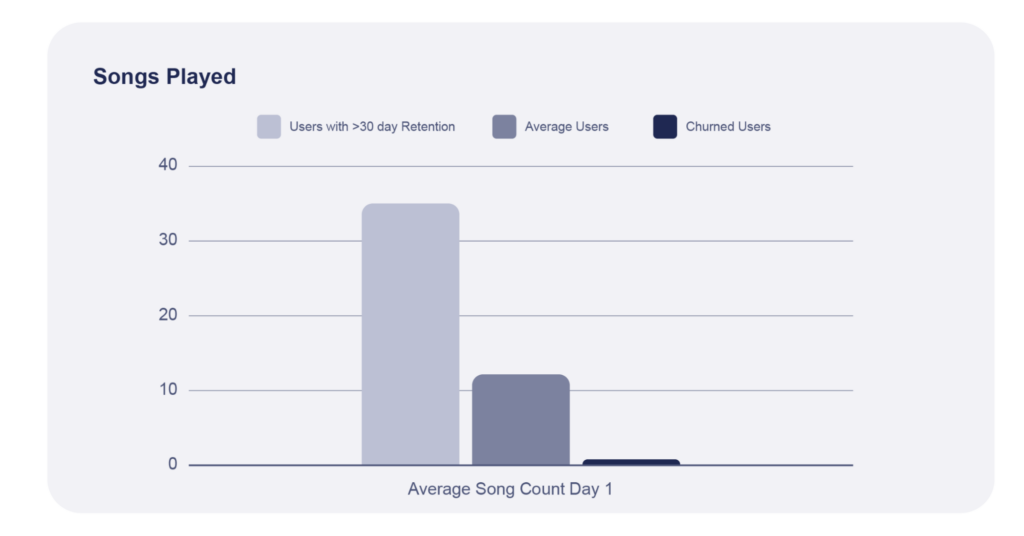

If you can’t use a customer value metric, look to customer engagement as a proxy. This doesn’t mean you need to wait for a user’s day-30 retention. Sometimes a very simple exercise, such as measuring first-day activity, can help you predict future performance. Commonly occurring events like pages swiped, posts viewed, or songs played can serve as proxies for a user’s future success. To set thresholds for these proxy events, compare average day-one totals across three cohorts: users who achieve a goal, the average user, and churned users.

Source: Branch

In this hypothetical example of an audio app, the average number of songs played on day 1 can serve as an indicator of 30-day user retention.
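The cohort comparison for the audio-app example can be sketched as below. The counts are invented, and placing the threshold at the midpoint between the churned and retained averages is an illustrative assumption; the text says to compare the cohorts but not how to pick the cut-off.

```python
from statistics import mean

# Hypothetical day-one "songs played" counts per user.
retained_day30 = [14, 22, 18, 25, 16]  # users who hit the goal: active on day 30
churned = [3, 6, 2, 9, 5]              # users who did not come back
all_users = retained_day30 + churned   # the average-user baseline


def proxy_threshold(goal_cohort: list[int], churned_cohort: list[int]) -> float:
    """Assumed rule: midpoint between the churned and goal-cohort averages."""
    return (mean(goal_cohort) + mean(churned_cohort)) / 2


def looks_retained(songs_played_day1: int, threshold: float) -> bool:
    """Flag a new user as a likely day-30 retainer from day-one activity."""
    return songs_played_day1 >= threshold


threshold = proxy_threshold(retained_day30, churned)
print(mean(retained_day30), mean(all_users), mean(churned), threshold)
```

Here a new user who plays 15 songs on day one would be flagged as a likely retainer, a signal you could map to a higher conversion value.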

Ad networks use your conversion values to optimize your campaigns

Postback delays in the current iteration of SKAN make real-time campaign optimization impractical, but ad networks are still trying to find you valuable users. Meta now suggests broad targeting over its famously high-performing lookalike audiences because it needs the latitude to find valuable users across broad swaths of inventory. The criteria it uses to judge valuable users? Your conversion values. The earlier, broader, and more accurate your signal, the better networks can optimize.

Keep in mind that it’s still early days for privacy-centric marketing. You can still optimize campaigns and find valuable channels; you just need to gain confidence in models that use proxies to predict future success. Real-time campaign optimization may be fading into the past, but marketers with a reasonably accurate conversion value strategy, one that can predict future value, will be able to forecast campaign performance much earlier in the process.

Branch is your partner on SKAN 4.0

Branch has been investing in our SKAN experience for a long time, and our work on SKAN 4.0 has only just begun. We’re looking forward to helping you make the most of the opportunities SKAN 4.0 offers.

Stay tuned for much more news in 2023!