At its core, experimentation in mobile growth is about testing and learning. It’s a disciplined process of trying out changes — a new onboarding flow, a different call to action (CTA), or updated deep-link routing — and measuring how they impact your goals. It’s not just guesswork. It’s how the most successful teams turn intuition into insight, and insight into scalable, measurable growth.

Experimentation is now table stakes for mobile marketers. What separates high-performing teams is not how many tests they run, but how intentionally they choose them — and how quickly they act on the results. The most effective teams run targeted experiments tied to real business outcomes and make clear calls on when to optimize, when to double down, and when to walk away.

Prioritization is what makes experimentation pay off

If you’re a mobile app marketer, you’re likely familiar with this scenario: a backlog full of test ideas, a sprint calendar packed with a zillion tweaks, and yet, no meaningful results. Experimentation without prioritization is just noise.

Industry research consistently shows that experimentation only delivers results when it’s embedded, structured, and focused on outcomes — not volume.

McKinsey research shows that top innovators are 3x more likely to embed experimentation across their organization, enabling faster learning and clearer decision-making. Mastercard reports that 92% of retailers using test-and-learn frameworks generate 10x ROI on average. Gartner adds that a test-and-learn culture moves organizations beyond opinion and toward consistent improvement.

The takeaway is simple: Positive, measurable results don’t come from more tests. They come from better decisions about which tests to run and which to stop altogether.

How high-performing teams actually optimize

Optimization often gets mistaken for perfection. In practice, it’s about making steady, deliberate improvements that compound over time. High-performing teams don’t try to optimize everything at once. They focus on the actions and signals that reliably move the business.

That looks like:

- Prioritizing early user actions that reliably lead to downstream outcomes, such as guiding users from a product page to an app install

- Using a clear test-prioritization framework, whether ICE (impact, confidence, effort) or a custom model, to decide what earns time and resources

- Aligning KPIs across marketing, product, and data teams so optimization efforts point toward the same goal

- Setting explicit exit criteria so teams know when to double down — and when to stop
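To make the prioritization step concrete, here is a minimal sketch of an ICE-style scoring model in Python. The 1-to-10 scales, the formula (impact times confidence, divided by effort), the `ExperimentIdea` class, and the sample backlog are all illustrative assumptions, not a standard or a Branch feature. Because this article lists effort as the third factor, the sketch divides by it so that heavier tests rank lower.

```python
from dataclasses import dataclass


@dataclass
class ExperimentIdea:
    """One entry in a hypothetical shared test backlog."""
    name: str
    impact: int      # expected effect on the target KPI, 1 (low) to 10 (high)
    confidence: int  # how sure the team is about that effect, 1 to 10
    effort: int      # cost to build and run, 1 (trivial) to 10 (heavy)

    def ice_score(self) -> float:
        # Assumed formula: reward impact and confidence, penalize effort.
        return self.impact * self.confidence / self.effort


def prioritize(backlog: list[ExperimentIdea]) -> list[ExperimentIdea]:
    """Return the backlog sorted from highest to lowest ICE score."""
    return sorted(backlog, key=lambda idea: idea.ice_score(), reverse=True)


# Made-up backlog entries, for illustration only.
backlog = [
    ExperimentIdea("New onboarding flow", impact=8, confidence=5, effort=8),
    ExperimentIdea("Smart banner copy", impact=5, confidence=7, effort=2),
    ExperimentIdea("Deep-link routing fix", impact=7, confidence=8, effort=4),
]

for idea in prioritize(backlog):
    print(f"{idea.name}: {idea.ice_score():.1f}")
```

The exact formula matters less than applying the same one to every idea, so that marketing, product, and data teams can compare tests on a shared scale.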

What prioritized experimentation looks like in the real world

Some of the world’s most successful brands have adopted a test-and-learn culture along with a heavy dose of prioritization. Here are just a few:

1. SEEK, a leading online employment platform, worked to improve how mobile web users convert to its app by testing specific points in the job-seeking journey. The team experimented with when app prompts appeared, how they were presented, and what message they delivered — including localized language, visual design changes, dismissal behavior, and placement closer to high-intent moments like job-detail views. By scaling the tactics that lifted installs and cutting those that increased bounce or hurt applications, SEEK raised its click-to-install rate from 0.2% to 11%, driving 55x growth in app conversions.

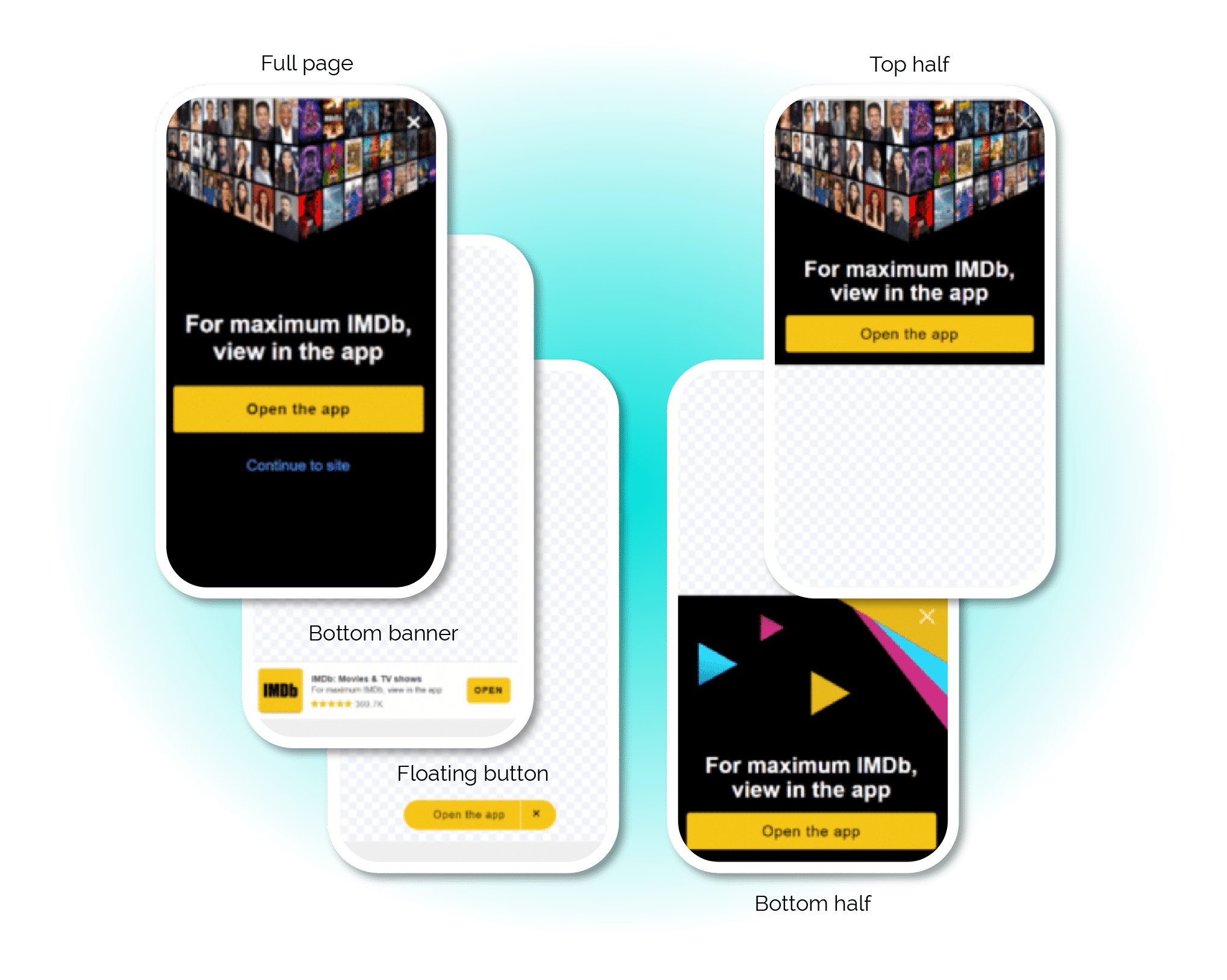

2. IMDb, the world’s leading source for movie and TV information, set out to improve web-to-app conversion from its mobile site. The team replaced a static full-page pop-up with a series of Journeys smart banner experiments, testing formats, placement, and messaging at scale. By quickly identifying top-performing variants and shutting off intrusive or low-impact options, IMDb increased experiment volume 9x and drove a 443% increase in app installs from mobile web.

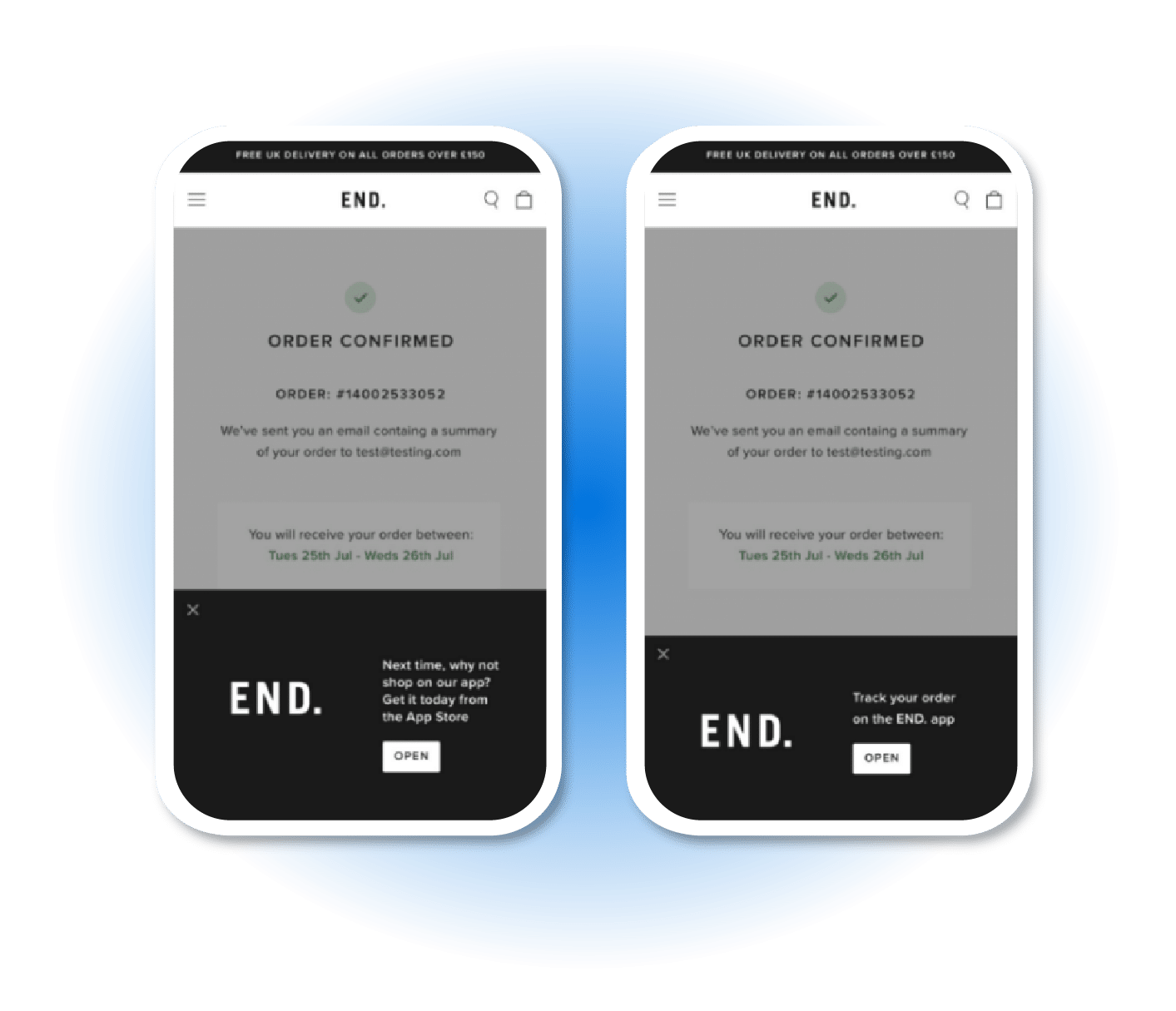

3. END., a leading fashion, sneaker, and design destination, focused on driving higher-quality app traffic from its mobile website. The team tested different smart banner formats, messaging, and placements, then quickly shut off what underperformed. By leaning into the combinations that drove installs and conversions, END. saw a 105% increase in app installs and 44% quarter-over-quarter revenue growth.
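The results above mix two ways of reporting growth: multipliers ("55x") and percentage increases ("443%"). The short Python sketch below shows how the two relate, using only the figures quoted in these examples. The function names are hypothetical helpers written for this illustration.

```python
def fold_change(before: float, after: float) -> float:
    """How many times larger `after` is than `before`."""
    return after / before


def percent_increase_to_multiplier(percent_increase: float) -> float:
    """Convert a percentage increase into a multiplier (a 100% increase is 2x)."""
    return 1 + percent_increase / 100


# SEEK: click-to-install rate moved from 0.2% to 11%.
print(round(fold_change(0.2, 11), 1))

# IMDb: a 443% increase in installs, expressed as a multiplier.
print(round(percent_increase_to_multiplier(443), 2))

# END.: a 105% increase in installs, expressed as a multiplier.
print(round(percent_increase_to_multiplier(105), 2))
```

Read this way, a 443% increase means roughly 5.4 times the original install volume, and a 105% increase means slightly more than double.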

Operationalizing growth experiments (without the chaos)

Experimentation often starts scrappy. But to become a scalable growth engine, it needs operational rigor. That’s where growth ops comes in — the process layer that turns test ideas into repeatable outcomes.

When teams build systems around experimentation, they reduce chaos, speed up learning cycles, and close the gap between insight and execution. Practical ways to get there include:

- Running a weekly prioritization cadence across marketing and product

- Using a shared backlog and scoring model

- Tying every test to a core KPI, such as installs, conversions, engagement, or lifetime value (LTV)

- Defining success thresholds and stop conditions up front

- Turning insights into playbooks or templates that teams can reuse

The goal isn’t higher test velocity. It’s higher test maturity.

Admit when something’s not working, and the sooner the better

One of the most powerful decisions a growth team can make is to stop a test. Knowing when to walk away is just as important as knowing when to double down. Without clear exit rules, teams waste time chasing marginal gains or waiting for signals that never come.

High-performing teams hit pause when:

- Results flatten after two to three weeks with no directional signal

- Execution friction outweighs potential upside

- The segment is too small to scale learnings

- The test isn’t tied to a strategic metric
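These four conditions can be written down as an explicit check that runs during a weekly review. The Python sketch below is one way to do that, under stated assumptions: the `ExperimentStatus` fields, the 1-to-10 friction and upside scores, and the 1,000-user minimum segment size are hypothetical, and the three-week limit is simply the upper end of the range mentioned above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ExperimentStatus:
    """Snapshot of a running test (all fields are illustrative)."""
    weeks_running: float
    has_directional_signal: bool     # is any variant clearly trending?
    friction_score: int              # execution friction, 1 (low) to 10 (high)
    upside_score: int                # potential upside, 1 (low) to 10 (high)
    segment_size: int                # users eligible for the test
    strategic_metric: Optional[str]  # KPI the test is tied to, if any


def pause_reasons(
    status: ExperimentStatus,
    flat_weeks_limit: float = 3,   # assumed: upper end of "two to three weeks"
    min_segment_size: int = 1000,  # assumed threshold, tune per app
) -> list[str]:
    """Return every pause condition the test currently meets."""
    reasons = []
    if status.weeks_running >= flat_weeks_limit and not status.has_directional_signal:
        reasons.append("No directional signal after the flat-results window")
    if status.friction_score > status.upside_score:
        reasons.append("Execution friction outweighs potential upside")
    if status.segment_size < min_segment_size:
        reasons.append("Segment is too small to scale learnings")
    if not status.strategic_metric:
        reasons.append("Test is not tied to a strategic metric")
    return reasons


# Made-up example: three weeks in, no trend, and a small segment.
status = ExperimentStatus(
    weeks_running=3,
    has_directional_signal=False,
    friction_score=4,
    upside_score=6,
    segment_size=250,
    strategic_metric="installs",
)

for reason in pause_reasons(status):
    print(reason)
```

An empty list means none of the pause conditions apply. Any non-empty list is a prompt for a decision, not an automatic shutdown.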

Again, walking away isn’t failure. It’s focus.

Final thought: Strategic experimentation is a leadership trait

It’s easy to assume that more experiments lead to more growth. In reality, the strongest teams are selective. They prioritize ruthlessly, align tightly across teams, and recognize that abandoning a test can be a sign of maturity.

If you’re ready to turn experimentation into a true growth strategy, we can help. From deep linking and measurement to seamless user journeys, Branch helps teams test smarter, optimize faster, and scale what works.